The typical scientific process is to come up with a hypothesis about how something works, and then compare the consequences of that hypothesis with experimental observations. In neuroscience, this often means breaking a complex system (e.g., the brain) into separate parts, studying them in isolation (or in a reduced setting), and then hypothesizing a circuit in which these parts are connected together to form a working whole.

The problem is that when experiments are done in the non-reduced setting, these parts often “misbehave” and change their properties. For instance, probing neurons in the visual cortex with sinusoidal gratings does not tell us how they will respond to natural stimuli. Noticing that a neuron in prefrontal cortex responds preferentially to the memory of a certain object does not guarantee that it will do so for the same object in a different context.

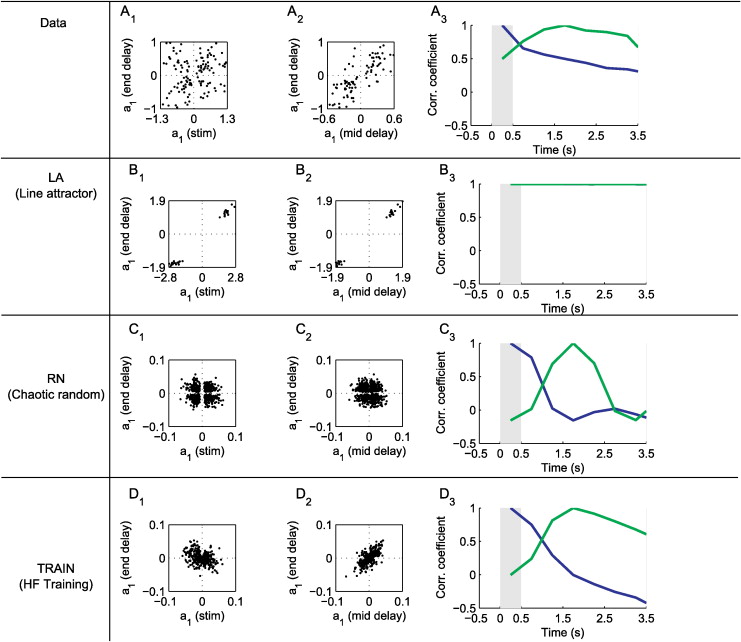

This behavior is very different from that of designed circuits, in which every element has a well-defined function. A recent alternative approach to modeling the brain is to train neural networks to perform a certain task without specifying how they should solve it. It turns out that the statistics of artificial neural activity in such networks bear some resemblance to those observed in the brain. This has been shown for visual cortex, prefrontal cortex, motor cortex, and other areas.

Because these networks are, after all, very artificial, it’s not clear what exactly should be compared between model and experiment. Is it single-neuron activity? Synaptic connectivity? Population dynamics?

From fixed points to chaos: three models of delayed discrimination

Representational drift as a result of implicit regularization